Ideally, transactions should be short and fast to avoid deadlocks. Also, try to keep the user interaction to minimum levels because it affects the speed.

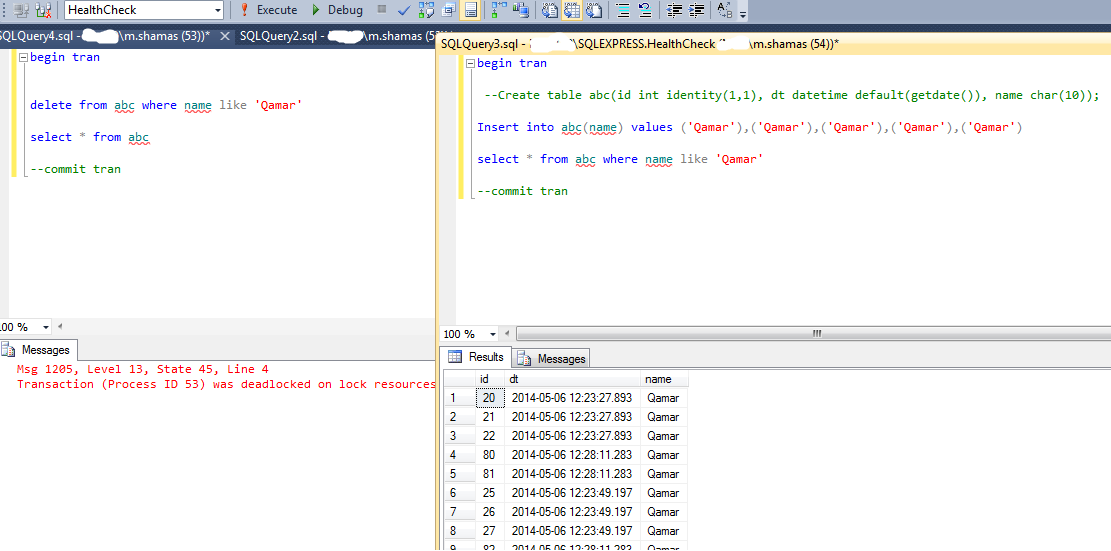

You can restrict the users to input any sorts of data when the transaction is processing and you may update the data prior to the transaction to avoid deadlocks. Deadlock victims are chosen on the basis of Deadlock priority set by Server or rollback cost. Thus, all locks get released and previous sessions are allowed to continue the process. Usually Lock Monitors perform deadlock check and when detected, they select one deadlock victim and roll its transaction back. DBAs should design clear set of rules for accessing Database objects. To minimize deadlocks, all the concurrent transactions should access objects in a well defined order. Keep the order sameĭeadlocks are bound to occur if the resources are not processed in a well defined order. It should be noted that deadlocks directly affect the performance and may halt the processing of database. Some deadlocks are caused because of poorly designed queries too. The most common cause is poor design of database, without proper validation and testing, and lack of indexing. SQL servers are designed to detect the deadlocks automatically, but if they are reported, DBAs should try to understand the reason behind the deadlock. Deadlock troubleīefore jumping to the tips to minimize deadlocks, let’s take a quick glance at some of the most likely causes of deadlocks. It’s not possible to avoid deadlocks altogether but one can surely minimize the chance of creating a deadlock. While working with SQL servers, deadlocks are pretty common and they can hamper the entire process. None of the process can continue as both are locked and require the other one to release the resource or lock. As a result, both are stuck in a deadlock situation. Imagine a situation where one person is asking the second to release a resource and the second is waiting for the first to release. The conflicts where one process awaits the release of another resource are called blocks. 
Ideally, a database server should be able to retrieve multiple requests but it often results in blocks. I have tried to position this method somewhere else, call SaveChangesAsync() in other areas, even after the import routine, but it does not seem to work.The article suggests important tips to avoid Deadlocks in SQL ServersĪlthough SQL Server has witnessed huge evolution in past couple of years, users still regularly face the situation of deadlocks.
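The advice that all concurrent transactions should access objects in a well-defined order can be illustrated without a database. The following is a minimal sketch, not SQL Server code: `Account`, `Gate`, and `OrderedLocking` are hypothetical names, and plain in-memory locks stand in for row locks. The point is only that both workers take the two locks in the same order (lowest `Id` first), so neither can end up holding one lock while waiting for the other.

```csharp
using System;
using System.Threading.Tasks;

// Hypothetical in-memory stand-in for a row that must be locked before it is changed.
public sealed class Account
{
    public int Id { get; }
    public decimal Balance { get; set; }
    public object Gate { get; } = new object();

    public Account(int id, decimal balance)
    {
        Id = id;
        Balance = balance;
    }
}

public static class OrderedLocking
{
    // Always lock the account with the lower Id first, whichever way the money moves.
    public static void Transfer(Account from, Account to, decimal amount)
    {
        if (from.Id == to.Id) throw new ArgumentException("Accounts must differ.");

        Account first = from.Id < to.Id ? from : to;
        Account second = from.Id < to.Id ? to : from;

        lock (first.Gate)
        lock (second.Gate)
        {
            from.Balance -= amount;
            to.Balance += amount;
        }
    }

    public static void Main()
    {
        var a = new Account(1, 1000m);
        var b = new Account(2, 1000m);

        // Two workers transfer in opposite directions at the same time.
        Task t1 = Task.Run(() => { for (int i = 0; i < 10000; i++) Transfer(a, b, 1m); });
        Task t2 = Task.Run(() => { for (int i = 0; i < 10000; i++) Transfer(b, a, 1m); });
        Task.WaitAll(t1, t2);

        Console.WriteLine($"{a.Balance} {b.Balance}");
    }
}
```

If `Transfer` locked `from` first and `to` second instead, the two workers could each hold one lock and wait forever for the other. The database equivalent is to touch tables and rows in the same sequence in every transaction, for example by sorting keys before updating them.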

I have an import routine that imports a lot of data from my ERP into my SQL database. The IDs coming from my ERP are compared with the ones in my database. Based on that, I'm either inserting or updating entries in my DB:

    private static async Task ImportWorkOrderBatch(/* ... */)
    {
        db.Database.CommandTimeout = ImportDatabaseTimeout;

        // Load the existing rows and the ERP data in parallel.
        var getExistingWorkOrdersTask = GetBatchFromDb(db, dbLimiter, batch);
        Task getEsbDataTask = GetBatchFromEsb(batch, client, esbLimiter, clsNamesToImport, language, issueList);
        await Task.WhenAll(getExistingWorkOrdersTask, getEsbDataTask);
        existingWorkOrders = getExistingWorkOrdersTask.Result;

        foreach (dsyWorkOrder05TtyWorkOrder currentDetail in esbResults)
        {
            if (!existingWorkOrders.TryGetValue(currentDetail.Obj, out currentWorkOrder) || currentWorkOrder == null)
            {
                // ...
            }
            await currentWorkOrder.ApplyTtyWorkOrder(currentDetail, resourceMapper, smlStorage, language);
        }

        // Deleted entries that have been removed from ProAlpha
        DeleteOldWorkOrders(batch, existingWorkOrders, esbResults);
        // ...
    }

Everything works fine until the code arrives in my DeleteOldWorkOrders method. When a work order needs to be deleted and I try to set its Deleted field to true, I get this error:

    An exception has been raised that is likely due to a transient failure. If you are connecting to a SQL Azure database consider using SqlAzureExecutionStrategy.
    Inner exception 1: Transaction (Process ID 99) was deadlocked on lock | communication buffer resources with another process and has been chosen as the deadlock victim.

My SQL Server Profiler says that there is a deadlock on my UPDATE statement when setting Deleted = true. I can't understand why this entry is locked by another process; it just gets selected and then updated. This is my DeleteOldWorkOrders method:

    private static void DeleteOldWorkOrders(List batch, Dictionary existingWorkOrders, dsyWorkOrder05TtyWorkOrder erpResults)
    {
        // ...
            .Where(wob => !providedObjIDs.Contains(wob.ObjID));

        foreach (DataEntryStruct dataEntry in workOrdersToDelete)
        {
            if (existingWorkOrders.TryGetValue(dataEntry.ObjID, out WorkOrder elementToDelete))
            {
                // ...
            }
        }
    }
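The error quoted in the question says the transaction was chosen as the deadlock victim, which means SQL Server rolled it back and the work can be attempted again. One common mitigation, shown here only as a hedged sketch and not as the fix for this particular import routine, is to retry the whole unit of work a few times. `DeadlockVictimException` and `DeadlockRetry` are hypothetical names so that the example runs without a database; with SqlClient the corresponding check would be a `SqlException` whose `Number` is 1205.

```csharp
using System;
using System.Threading.Tasks;

// Hypothetical stand-in for the provider exception raised for a deadlock victim
// (with SqlClient: a SqlException whose Number is 1205).
public sealed class DeadlockVictimException : Exception
{
    public DeadlockVictimException() : base("Chosen as the deadlock victim.") { }
}

public static class DeadlockRetry
{
    // Runs the unit of work and repeats it, with a growing delay, when it is
    // rolled back as a deadlock victim. The last failure is rethrown.
    public static async Task<T> ExecuteAsync<T>(Func<Task<T>> work, int maxAttempts = 3, int baseDelayMs = 50)
    {
        if (maxAttempts < 1) throw new ArgumentOutOfRangeException(nameof(maxAttempts));

        for (int attempt = 1; ; attempt++)
        {
            try
            {
                return await work();
            }
            catch (DeadlockVictimException) when (attempt < maxAttempts)
            {
                // The whole transaction was rolled back, so the whole unit of work is repeated.
                await Task.Delay(baseDelayMs * attempt);
            }
        }
    }

    public static async Task Main()
    {
        int calls = 0;
        int result = await ExecuteAsync(() =>
        {
            calls++;
            if (calls < 3) throw new DeadlockVictimException(); // simulate two deadlocks
            return Task.FromResult(42);
        });

        Console.WriteLine($"result={result} after {calls} attempts"); // prints "result=42 after 3 attempts"
    }
}
```

Retrying only hides the symptom. It is still worth finding the second session involved in the deadlock, for example from the deadlock graph, and checking whether both sessions touch the same rows in a different order.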
